The goal of part 4 in our ExCyTIn-Bench series is to make a point that’s easy to miss amid all the hype: a naive approach to AI-augmented SOC operations doesn’t work.

If you take a state-of-the-art LLM, give it access to your SIEM (or data lake), and start asking it investigation questions, you’ll get something that looks intelligent, right up until the moment the investigation becomes multi-step, ambiguous, noisy, or requires pivots across tables you didn’t explicitly explain. At that point, the agent often stalls, thrashes, or confidently answers incorrectly.

ExCyTIn-Bench is valuable precisely because it forces this reality into the open: LLM intelligence alone does not make a SOC agent successful. What matters is whether you build a strategy for orienting the agent to your data, your business, and your investigative process.

Revisiting Microsoft’s ExCyTIn-Bench Findings: “Smarter Models” Wasn’t the Answer

Microsoft didn’t just benchmark different models. They experimented with different agent strategies: how the model reasons, how it explores data, how it recovers from dead ends, and how it reuses prior learning. Those techniques produced meaningful performance swings even when the underlying LLM stayed the same. Below is a recap of the key approaches Microsoft explored (and what they imply for real-world SOC design).

Naive (Base) Approach

This is the “default instinct” most teams start with:

- Agents use a straightforward prompt to interact with the database.

- The agent generates SQL queries, reviews results, and submits an answer.

- The answer serves as a baseline for performance comparison.

- The agent is limited in handling complex multi-step investigations, especially when it must discover schema, identify pivots, and interpret partial evidence.

In practice, the naive approach tends to restart every investigation from the same place: it has no durable orientation to your environment beyond whatever the user typed into the prompt.
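Here is a minimal Python sketch of the naive loop. It rests on two assumptions. A hypothetical `stub_generate_sql` function takes the place of a real LLM call, and a toy SQLite table named `DeviceInfo` takes the place of a SIEM. Neither reflects Microsoft's actual harness. The point is the shape of the loop: one query, one observation, one answer.

```python
import sqlite3
from typing import Callable, List, Tuple


def naive_investigate(question: str, conn: sqlite3.Connection,
                      generate_sql: Callable[[str], str]) -> List[Tuple]:
    """Naive pattern: one prompt -> one query -> one observation -> submit.

    No schema discovery, no pivots, no retry, and nothing carried over to the
    next investigation.
    """
    sql = generate_sql(question)            # the prompt text is the only guidance
    return conn.execute(sql).fetchall()     # whatever comes back is the answer


def stub_generate_sql(question: str) -> str:
    # Hypothetical stand-in for an LLM call that writes SQL from the question.
    return "SELECT DeviceId FROM DeviceInfo WHERE DeviceName = 'vnevado-win11u'"


# Toy in-memory table standing in for a SIEM or data lake.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE DeviceInfo (DeviceId TEXT, DeviceName TEXT)")
conn.execute("INSERT INTO DeviceInfo VALUES ('dev-001', 'vnevado-win11u')")

answer = naive_investigate("What is the device ID for vnevado-win11u?", conn, stub_generate_sql)
print(answer)  # [('dev-001',)]
```

Suppose the question needs a second hop, say from device to process to account. This function has no way to take it.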

ReAct (Reasoning and Acting)

ReAct is an early step toward “SOC realism”:

- It combines reasoning and action in one loop.

- The agent iteratively produces thought → query → observation → next step.

- Few-shot examples guide the structure of investigations (how to pivot, where to start, how to validate).

- This improves performance because it enables step-by-step investigation logic instead of one-shot guessing, and it makes query refinement far more consistent.
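A minimal Python sketch of the ReAct loop follows. Several assumptions apply. `scripted_policy` is a hypothetical stand-in for an LLM prompted with few-shot examples. The `DeviceInfo` and `ProcessEvents` tables are simplified toy tables, not real product schemas, and the SID is toy data. What matters is that each observation feeds the next thought, which is what lets the agent pivot.

```python
import sqlite3
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple

Action = Tuple[str, str, str]  # (thought, action, argument); action is "query" or "answer"


@dataclass
class Trace:
    # Each entry is (thought, sql, observation); the policy sees the whole trace every turn.
    steps: List[Tuple[str, str, list]] = field(default_factory=list)


def react_investigate(question: str, conn: sqlite3.Connection,
                      policy: Callable[[str, Trace], Action],
                      max_turns: int = 10) -> Tuple[Optional[str], Trace]:
    """ReAct-style loop: thought -> query -> observation, until the policy answers."""
    trace = Trace()
    for _ in range(max_turns):
        thought, action, arg = policy(question, trace)
        if action == "answer":
            return arg, trace
        try:
            observation = conn.execute(arg).fetchall()
        except sqlite3.Error as exc:        # a failed query is still useful feedback
            observation = [("error", str(exc))]
        trace.steps.append((thought, arg, observation))
    return None, trace                      # turn budget exhausted without an answer


def scripted_policy(question: str, trace: Trace) -> Action:
    """Hypothetical stand-in for an LLM prompted with few-shot ReAct examples."""
    if not trace.steps:
        return ("Resolve the host name first.", "query",
                "SELECT DeviceId FROM DeviceInfo WHERE DeviceName = 'vnevado-win11u'")
    if len(trace.steps) == 1:
        device_id = trace.steps[0][2][0][0]  # pivot on the previous observation
        # String formatting keeps the toy short; real code should use bound parameters.
        return ("Pivot to curl processes on that device.", "query",
                "SELECT AccountSid FROM ProcessEvents "
                f"WHERE DeviceId = '{device_id}' AND FileName = 'curl.exe'")
    return ("The SID is in the last observation.", "answer", trace.steps[-1][2][0][0])


conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE DeviceInfo (DeviceId TEXT, DeviceName TEXT);
    CREATE TABLE ProcessEvents (DeviceId TEXT, FileName TEXT, AccountSid TEXT);
    INSERT INTO DeviceInfo VALUES ('dev-001', 'vnevado-win11u');
    INSERT INTO ProcessEvents VALUES ('dev-001', 'curl.exe', 'S-1-5-21-1000');
""")
answer, trace = react_investigate("Which SID ran curl on vnevado-win11u?", conn, scripted_policy)
print(answer, len(trace.steps))  # S-1-5-21-1000 2
```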

Best-of-N (BoN)

Best-of-N embraces an uncomfortable truth: LLM outputs are stochastic.

- The agent performs multiple independent trials for each question.

- It selects the best result among attempts based on reward/score.

- It improves robustness against random mistakes in query generation and reasoning.
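A minimal Python sketch of Best-of-N follows. `attempt` and `score` are hypothetical callables. In a benchmark, the score can come from grading against a known answer, and that is exactly what a live incident does not provide. The five scripted outcomes stand in for LLM randomness.

```python
from typing import Callable, List, Tuple


def best_of_n(attempt: Callable[[], str],
              score: Callable[[str], float],
              n: int = 5) -> Tuple[str, float]:
    """Best-of-N: run n independent trials, then keep the highest-scoring answer.

    Note the cost: n full investigations per question, plus a scorer that must
    already know what "good" looks like.
    """
    candidates: List[Tuple[str, float]] = []
    for _ in range(n):
        answer = attempt()                       # one stochastic investigation
        candidates.append((answer, score(answer)))
    return max(candidates, key=lambda c: c[1])   # first answer with the top score


# Scripted outcomes of five runs of a hypothetical agent (stands in for LLM randomness).
outcomes = iter(["unknown", "S-1-5-18", "S-1-5-21-1000", "S-1-5-18", "unknown"])
best, best_score = best_of_n(lambda: next(outcomes),
                             lambda a: 1.0 if a == "S-1-5-21-1000" else 0.0)
print(best, best_score)  # S-1-5-21-1000 1.0
```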

BoN can boost accuracy, but it does so by spending more turns and more compute, which leads directly into a real-world constraint I’ll address shortly. That said, Best-of-N may have a real-world role in scenarios off the critical path, where speed and timeliness are not the primary concern. For example, Best-of-N-style analysis could be useful in a threat hunt, where you can allocate hours to experimentation and learning to get results.

Reflection

Reflection extends Best-of-N by turning failed attempts into “learning” during test-time:

- The agent critiques mistakes, appends insights back into the prompt, and tries again.

- This can improve both performance and efficiency (fewer wasted attempts).

- Reflection tends to work best when combined with ReAct-style stepwise investigation.
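A minimal Python sketch of the reflection loop follows. Both `stub_attempt` and `stub_evaluate` are hypothetical. The evaluator is where a benchmark's reward signal enters, and a production SOC rarely has an equivalent for a live question. Each failed trial adds a critique to the prompt before the next try.

```python
from typing import Callable, List, Optional, Tuple


def reflect_and_retry(question: str,
                      attempt: Callable[[str], str],
                      evaluate: Callable[[str], Tuple[bool, str]],
                      max_trials: int = 3) -> Tuple[Optional[str], List[str]]:
    """Reflection: after each failed trial, append a critique to the prompt and retry."""
    prompt = question
    insights: List[str] = []
    for _ in range(max_trials):
        answer = attempt(prompt)
        ok, critique = evaluate(answer)          # needs a feedback signal to critique against
        if ok:
            return answer, insights
        insights.append(critique)
        prompt = question + "\nLessons from earlier attempts:\n" + "\n".join(insights)
    return None, insights


def stub_attempt(prompt: str) -> str:
    # Hypothetical agent: answers correctly only once the lesson is in its prompt.
    return "S-1-5-21-1000" if "join on DeviceId" in prompt else "S-1-5-18"


def stub_evaluate(answer: str) -> Tuple[bool, str]:
    # Hypothetical feedback; in a benchmark this comes from grading, not from the SOC.
    if answer == "S-1-5-21-1000":
        return True, ""
    return False, "Returned the SYSTEM SID; join on DeviceId before reading logons."


answer, insights = reflect_and_retry("Which SID was involved?", stub_attempt, stub_evaluate)
print(answer, len(insights))  # S-1-5-21-1000 1
```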

Reflection is conceptually appealing, but it comes with important real-world caveats in production SOC operations (see below).

Expel (Experiential Learning)

Expel is the most operationally interesting of Microsoft’s techniques because it introduces reusable investigative memory:

- The agent distills rules/patterns from successful training examples.

- It retrieves similar examples during inference (external memory).

- It reduces wasted exploration by biasing the agent toward proven investigation paths.

Expel can achieve higher reward with fewer turns, at the cost of greater prompt and memory complexity.

This is the direction that starts looking less like a chatbot and more like a trained investigator.
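Below is a simplified Python sketch of Expel-style memory. It makes two assumptions. The insights are hand-written, whereas Expel has the LLM distill them. Word-overlap (Jaccard) similarity stands in for embedding similarity. The class and field names are illustrative, not Microsoft's implementation.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Experience:
    question: str
    succeeded: bool
    insight: str  # rule distilled from the trajectory (an LLM would write this)


def jaccard(a: str, b: str) -> float:
    """Word-overlap similarity; a cheap stand-in for embedding similarity."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0


class ExperienceMemory:
    """External memory: store insights from successful runs, recall the most similar ones."""

    def __init__(self) -> None:
        self.items: List[Experience] = []

    def add(self, exp: Experience) -> None:
        if exp.succeeded:                 # only keep what worked
            self.items.append(exp)

    def recall(self, question: str, k: int = 2) -> List[str]:
        ranked = sorted(self.items, key=lambda e: jaccard(question, e.question), reverse=True)
        return [e.insight for e in ranked[:k]]


memory = ExperienceMemory()
memory.add(Experience("which account sid logged on remotely to host", True,
                      "Resolve the host name to a DeviceId, then query logon events for it."))
memory.add(Experience("what file hash was dropped by the phishing email", True,
                      "Start from the email message ID, then pivot to file events."))
memory.add(Experience("which ip did the beacon contact", False, "(failed run, not stored)"))

hints = memory.recall("which account sid was involved in remote activity on host", k=1)
print(hints)  # ['Resolve the host name to a DeviceId, then query logon events for it.']
```

The recalled hints are then prepended to the agent's prompt. That placement is what biases exploration toward paths that have worked before.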

What “Naive” Really Means in a SOC Agent

“Naive approach” means something very specific: giving an LLM permission to query your SIEM/data lake and asking it to investigate, starting from scratch every time with only the prompt text as guidance. This approach fails because SOC investigations are not trivia questions. They are structured explorations:

- You rarely know the right table on the first try.

- You frequently pivot through intermediate entities (host → process → account → IP → related alert → sibling device).

- The right path is often procedural, not purely “knowledge-based.”

Without a strategy layer, the agent behaves like an intern who is brilliant at language, but unfamiliar with your environment, your logging quirks, your schema, and your investigation playbooks.

A Necessary Criticism: Why Reflection (and Multiple Guesses) Isn’t a Real SOC Plan

Learning from mistakes is good. But we need to be honest about how SOCs operate:

- In production, you typically don’t get multiple “guesses” for the same incident question.

- You don’t get to burn 10–30 extra query turns per alert while the agent “reflects.”

- You don’t get “graceful failure” as an expected outcome when the task is time-sensitive, regulated, or tied to a response SLA.

Reflection and Best-of-N are excellent benchmark tactics and research tools. But if your operational success depends on “we’ll try five times and pick the best,” you don’t have an investigation capability—you have a slot machine.

The Way Forward: Process Beats Technology

One of the most important takeaways from ExCyTIn-Bench is that strategy changes produced massive gains over the naive baseline, even with the same underlying LLM. While exact results vary by model and implementation details, the relative improvement trends are the key lesson:

- ReAct: ~30–40% improvement over naive

- Expel: ~40–50% improvement over naive

- Best-of-N: ~70% improvement (but relies on multiple guesses; not operationally viable as a primary strategy)

- Reflection: +15–20% over Best-of-N / ReAct (again: great for benchmarks, questionable as a frontline SOC dependency)

There are many ways to implement each technique well or poorly, and we only know what Microsoft published.

The message for CISOs planning an AI-augmented SOC is blunt:

- Dropping in a state-of-the-art LLM will not solve the problem.

- Process beats technology.

- If you want successful agents, you need an improving strategy that orients them to your environment.

How SRA Is Approaching This Challenge with SCALR AI

At Security Risk Advisors, we’re using our SCALR AI platform not just to run ExCyTIn-Bench-style tests, but to support something more important: a repeatable process for training and deploying bespoke investigative agents per client. These are agents that understand their tools, their logging reality, and their SOC workflows. The threshold is somewhat arbitrary, but we drew a line in the sand and set an 85% correct-answer rate as our starting definition of success. We didn’t formally score the remaining 15%, but those answers still had clear value. Even when they fell short of a correct answer, they contributed best-effort analysis and gathered useful data, giving an analyst a better starting point than the original question alone.
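A bar like this is easy to measure, and a minimal Python scorer follows for readers who want to reproduce one. Exact-match grading and the names used are assumptions for illustration. This is not ExCyTIn-Bench's grader or SCALR AI's scoring code. Best-effort answers simply count as misses, which matches the fact that they were not formally scored.

```python
from typing import Dict

SUCCESS_THRESHOLD = 0.85  # the line in the sand described above; a policy choice


def first_try_success_rate(predictions: Dict[str, str], gold: Dict[str, str]) -> float:
    """Share of questions answered correctly on the first attempt.

    Normalized exact match is an assumption; a benchmark grader may be more lenient.
    """
    def norm(s: str) -> str:
        return s.strip().lower()

    correct = sum(1 for qid, ans in gold.items() if norm(predictions.get(qid, "")) == norm(ans))
    return correct / len(gold)


# Hypothetical run: 20 questions, 2 answered with best-effort notes instead of the answer.
gold = {f"q{i}": f"answer-{i}" for i in range(20)}
predictions = dict(gold, q3="best-effort notes", q7="best-effort notes")
rate = first_try_success_rate(predictions, gold)
print(f"{rate:.0%}", rate >= SUCCESS_THRESHOLD)  # 90% True
```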

Our Discovery Process: Incremental Improvements, Then a Hard Wall

Our early testing mirrored the same progression many teams will follow:

- Start naive (“LLM + database access”)

- Add more structured prompting (ReAct-style)

- Add memory-like patterns (Expel-like concepts)

Each step improved results over the last. But none of them reliably produced what we’d consider acceptable investigation performance. This matches what Microsoft observed at the high end: even with best efforts and strong models, success rates land in the 50–60% range, depending on technique and scoring.

That might be impressive for research. It is not acceptable for an operational SOC capability.

The Training Data Looked Like “Just Words” (Until It Didn’t)

Microsoft’s provided training dataset includes:

- Context

- Question

- Answer

- Solution details (the investigative path)

On its surface, it can feel unhelpful because it reads like narrative text. The “solution” is described in words, not delivered as a machine-executable investigation recipe. But that dataset contained something valuable: repeatable multi-step investigation patterns. We needed a structure that could represent those patterns in a way an agent could reliably follow.
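A first step toward structure is to hold a narrative solution as an ordered list and turn it into edges. The Python sketch below does this under some assumptions. The `TrainingExample` field names and the pre-split `solution_steps` are hypothetical, because the dataset's on-disk format is not shown here. The per-step text would normally come from an LLM extraction pass.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class TrainingExample:
    """One training record with the four parts listed above (field names are illustrative)."""
    context: str
    question: str
    answer: str
    solution_steps: List[str]  # the narrative solution, split into ordered steps


def to_step_edges(example: TrainingExample) -> List[Tuple[str, str]]:
    """Turn ordered narrative steps into directed edges: step i -> step i + 1.

    A straight chain is the simplest DAG; real solutions can branch and merge.
    """
    steps = example.solution_steps
    return list(zip(steps, steps[1:]))


example = TrainingExample(
    context="curl to vectorsandarrows.com from vnevado-win11u; suspicious remote activity",
    question="What is the SID of the account involved in the remote activity?",
    answer="(withheld)",
    solution_steps=["resolve device id", "find curl process", "list logons in window",
                    "read account SID"],
)
edges = to_step_edges(example)
print(edges[0])  # ('resolve device id', 'find curl process')
```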

Why We Moved to Directed Acyclic Graphs (DAGs)

After a lot of iteration, we landed on a concept that security teams already understand intuitively, but rarely formalize for AI agents: Directed Acyclic Graphs (DAGs).

What is a DAG (in practical terms)?

A Directed Acyclic Graph is:

- A set of nodes (things like alerts, users, hosts, processes, IPs, hashes, investigation steps, intermediate answers)

- Connected by directed edges (relationships that have a direction: A leads to B, B pivots to C)

- With no cycles (you can’t loop forever; the structure always moves forward toward an outcome)

In SOC terms, a DAG is a structured representation of an investigation path where each step depends on the prior step’s outputs.
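The three properties above can be checked mechanically. The minimal Python sketch below uses Kahn's algorithm, a standard topological sort chosen here for illustration. It orders steps so each one runs after the steps it depends on, and it rejects input that contains a cycle. The node names are hypothetical.

```python
from collections import defaultdict, deque
from typing import Dict, Iterable, List, Set, Tuple


def topological_order(edges: Iterable[Tuple[str, str]]) -> List[str]:
    """Kahn's algorithm: order nodes so every edge points forward; fail on a cycle."""
    successors: Dict[str, List[str]] = defaultdict(list)
    indegree: Dict[str, int] = defaultdict(int)
    nodes: Set[str] = set()
    for src, dst in edges:
        successors[src].append(dst)
        indegree[dst] += 1
        nodes.update((src, dst))

    ready = deque(sorted(n for n in nodes if indegree[n] == 0))  # nothing to wait on
    order: List[str] = []
    while ready:
        node = ready.popleft()
        order.append(node)
        for nxt in successors[node]:
            indegree[nxt] -= 1
            if indegree[nxt] == 0:        # all of nxt's inputs are now available
                ready.append(nxt)

    if len(order) != len(nodes):          # leftover nodes sit on a cycle
        raise ValueError("cycle detected: not a DAG")
    return order


# Investigation steps as nodes; an edge A -> B means "B needs A's output".
steps = [("device", "curl_process"), ("device", "logons"),
         ("curl_process", "account_sid"), ("logons", "account_sid")]
print(topological_order(steps))  # ['device', 'curl_process', 'logons', 'account_sid']

try:
    topological_order([("host", "process"), ("process", "host")])
    looped = False
except ValueError:
    looped = True                         # a loop is rejected, as the definition requires
```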

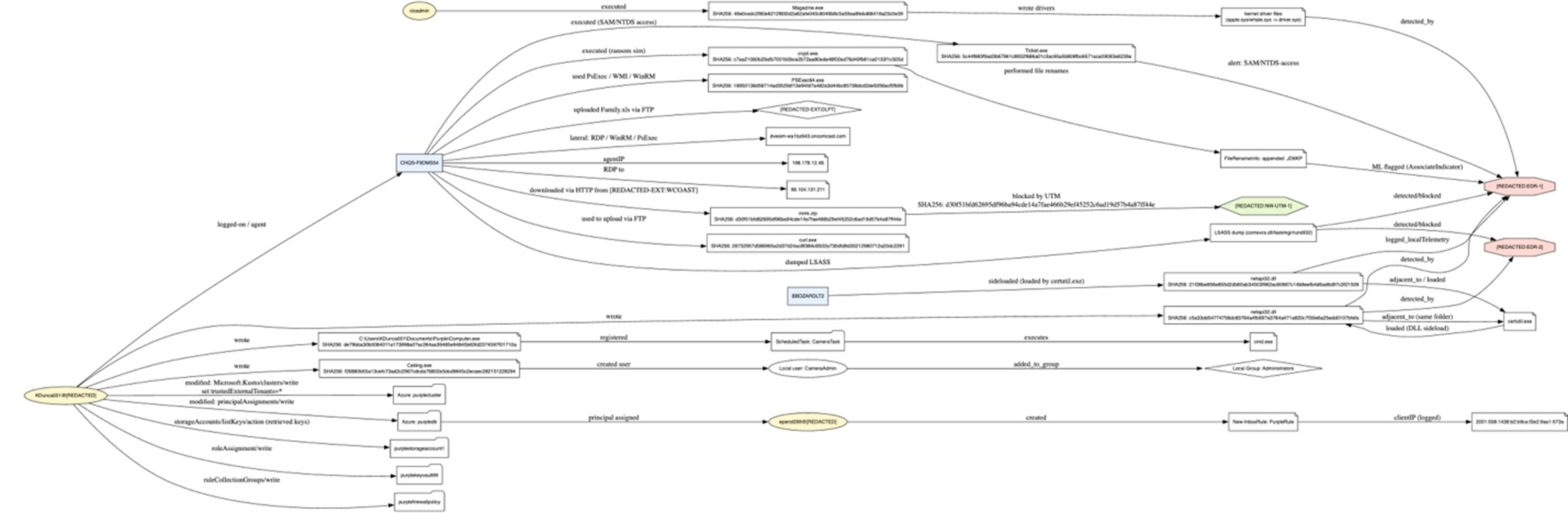

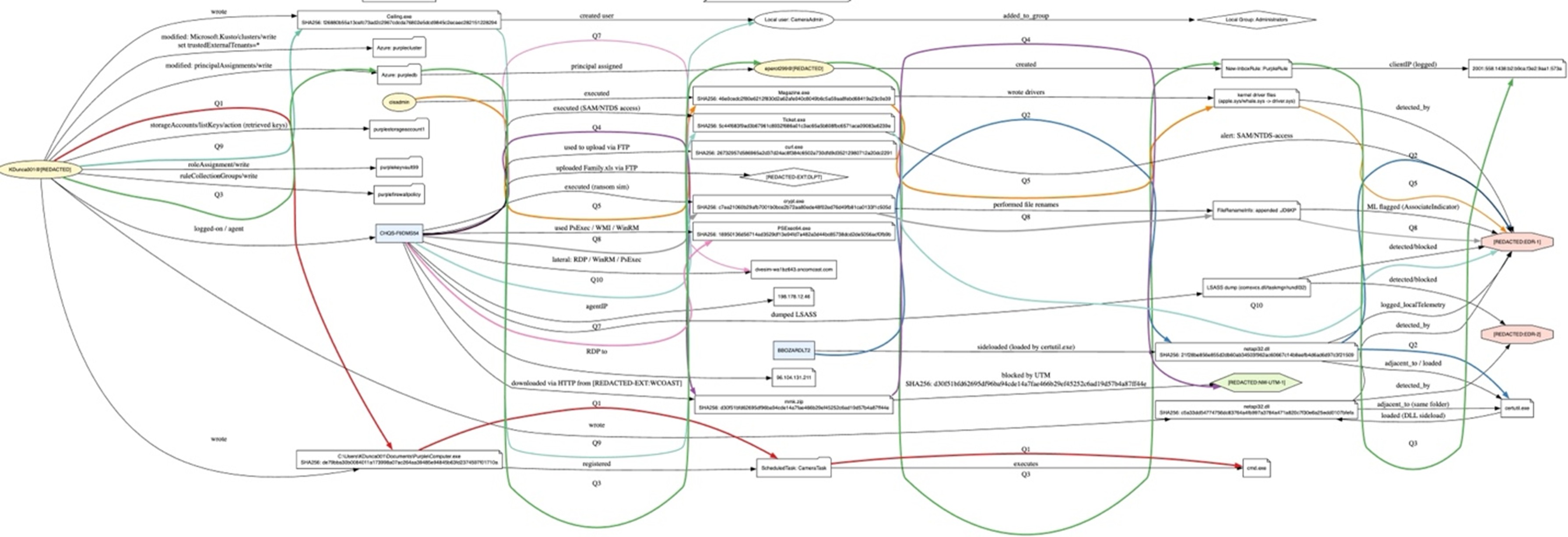

If you visualize an attack chain or a MITRE-style progression, you already think this way. The difference is that a DAG makes the investigation path explicit and machine-navigable: it becomes a “recipe” for how to move from any known data point to the target answer via intermediate pivots. Below is a graph visualization representing aspects of an attack. Technically speaking, we can extract attributes from a dataset like this to create our DAG solution patterns.

Below is a similar graph, with different colored lines representing solution paths to various questions, revealing each data point we need to gather to go from one node to the next to get the desired solution.

Why DAGs matter for SOC agents

The key property we want from the DAG is simple: If we know any one data point on the graph, and we have a question about any other data point, the DAG provides a step-by-step method via queries and pivots to reach the solution.
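One way to express that property in code is a shortest-path search over entity types. The Python sketch below assumes a hypothetical `PIVOTS` map from each entity type to the types reachable from it, with a note on how to pivot. A real library would be built from your own schema. The edges here run one way only, so a pivot that can be queried in both directions needs an explicit reverse edge.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

# Hypothetical pivot graph: entity type -> [(next entity type, how to pivot)]
PIVOTS: Dict[str, List[Tuple[str, str]]] = {
    "host_name": [("device_id", "look up device by name")],
    "device_id": [("process", "process events on device"),
                  ("logon", "logon events on device")],
    "process": [("account_sid", "account that launched the process")],
    "logon": [("account_sid", "account used for the logon"),
              ("remote_ip", "source address of the logon")],
}


def pivot_plan(known: str, target: str) -> Optional[List[str]]:
    """Breadth-first search for the shortest chain of pivots from a known entity to a target."""
    queue = deque([(known, [])])
    seen = {known}
    while queue:
        node, steps = queue.popleft()
        if node == target:
            return steps
        for nxt, how in PIVOTS.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, steps + [f"{node} -> {nxt}: {how}"]))
    return None  # the graph does not connect these entity types


plan = pivot_plan("host_name", "account_sid")
for step in plan:
    print(step)
```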

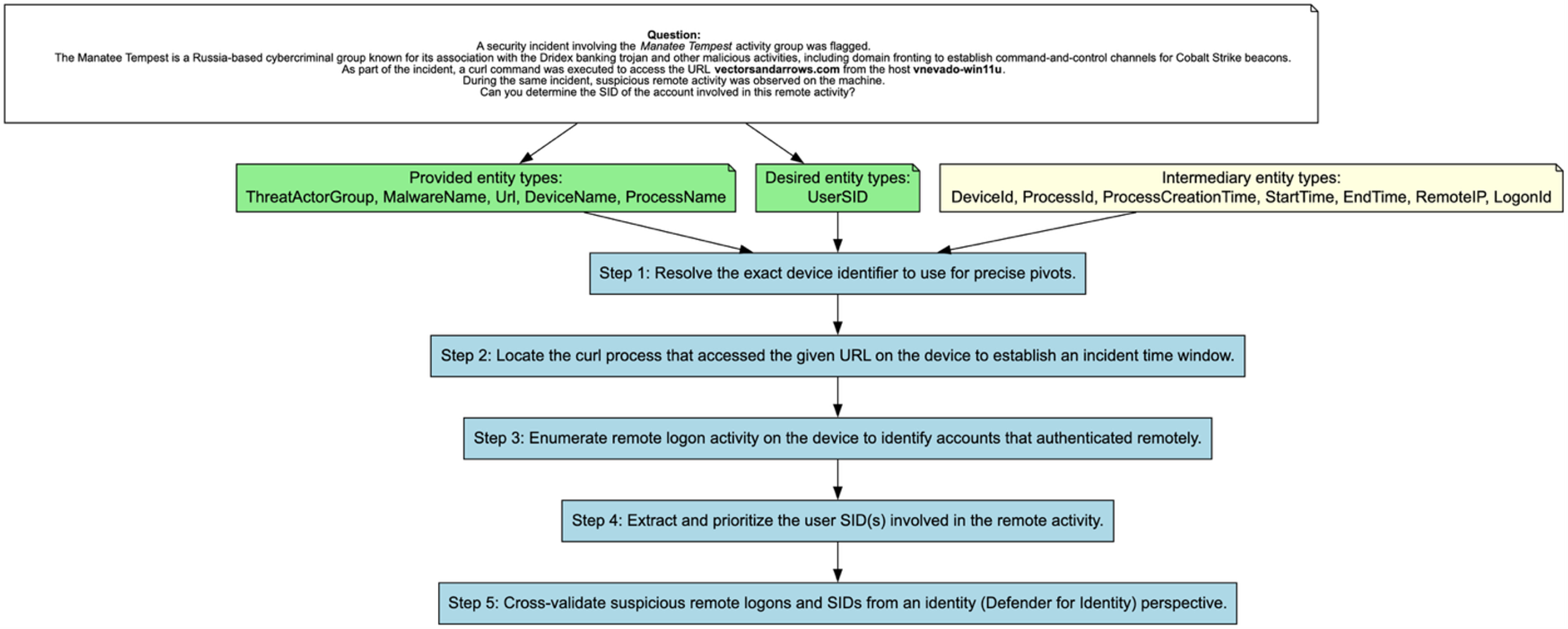

For example, consider this question posed by the framework:

A security incident involving the Manatee Tempest activity group was flagged. The Manatee Tempest is a Russia-based cybercriminal group known for its association with the Dridex banking trojan and other malicious activities, including domain fronting to establish command-and-control channels for Cobalt Strike beacons. As part of the incident, a curl command was executed to access the URL `vectorsandarrows.com` from the host `vnevado-win11u`. During the same incident, suspicious remote activity was observed on the machine. Can you determine the SID of the account involved in this remote activity?

A Logical Solution Path:

- Identify the unique ID of the device named in the context

- Find the curl process executed on that device during the time window

- Identify all logon activity during that time window

- Identify the SID involved for the account that ran the curl command

- Validate SID suspicious logons with Identity Security tools

This transforms an agent from searching to executing a proven investigation pattern. An example of a human-readable DAG is shown above, with our original context and question in white. In green are our known entity types: the fixed set of facts we already hold in our knowledge base (such as data coming from an incident), plus the fact type we want to find. The blue boxes represent the information-gathering steps that let us traverse from our known facts to the desired fact. This is the type of aid a DAG can provide to our agents.
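To make "machine-navigable" concrete, the minimal Python sketch below encodes the solution path above as a dependency graph. It then runs the steps with the standard library's `graphlib.TopologicalSorter`. The step names, toy data, and stub functions are hypothetical. In a real agent, each step would be a query the LLM writes or fills in.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+
from typing import Any, Callable, Dict, List

# The solution path above as a DAG: each step lists the steps whose outputs it needs.
SOLUTION_DAG: Dict[str, List[str]] = {
    "device_id": [],                                 # known from the incident context
    "curl_process": ["device_id"],
    "logons": ["device_id", "curl_process"],         # time window comes from the curl event
    "account_sid": ["curl_process", "logons"],
    "validate_sid": ["account_sid"],
}

# Hypothetical step implementations returning toy data; real ones would run queries.
STEPS: Dict[str, Callable[[Dict[str, Any]], Any]] = {
    "device_id": lambda r: "dev-001",
    "curl_process": lambda r: {"device": r["device_id"], "sid": "S-1-5-21-1000"},
    "logons": lambda r: [{"sid": "S-1-5-18", "type": "Service"},
                         {"sid": "S-1-5-21-1000", "type": "RemoteInteractive"}],
    "account_sid": lambda r: next(e["sid"] for e in r["logons"]
                                  if e["sid"] == r["curl_process"]["sid"]),
    # Stand-in: a real step would check the SID in identity security tooling;
    # here it only confirms a remote logon exists for that SID.
    "validate_sid": lambda r: any(e["sid"] == r["account_sid"] and e["type"] == "RemoteInteractive"
                                  for e in r["logons"]),
}


def run_solution_dag(dag: Dict[str, List[str]],
                     steps: Dict[str, Callable[[Dict[str, Any]], Any]]) -> Dict[str, Any]:
    """Execute steps in dependency order, passing every earlier result forward."""
    results: Dict[str, Any] = {}
    for name in TopologicalSorter(dag).static_order():
        results[name] = steps[name](results)   # all predecessors have already run
    return results


results = run_solution_dag(SOLUTION_DAG, STEPS)
print(results["account_sid"], results["validate_sid"])  # S-1-5-21-1000 True
```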

Our Technique: Build a Library of Solution DAGs and Retrieve the Right One at Runtime

With that insight, we built a technique in SCALR AI to:

- Use AI to extract/construct a library of DAGs from Microsoft’s training solution data (representing different investigation solution patterns).

- Vectorize those DAGs (store them as embeddings so they can be searched by similarity).

- At runtime, retrieve the best-matching DAG patterns for the current question/context.

- Provide those patterns to the agent as investigation scaffolding, so it follows a known-good pivot path rather than improvising.

The result: over a 90% success rate on first trial in our testing.

That’s the difference between an interesting demo and an operationally viable investigation capability. It’s exactly the type of strategy we expect to deliver for clients: structured, measurable, and tuned to the realities of SOC work.
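The retrieve-then-scaffold step described above fits in a few dozen lines of Python. This is a toy that rests on loud assumptions. `embed` is a bag-of-words stand-in for a real embedding model. An in-memory list stands in for a vector store. The two `SolutionDag` entries are hypothetical. It is not SCALR AI's implementation; it only shows the shape of the technique.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Stand-in for an embedding model: a sparse bag-of-words vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[token] for token, count in a.items() if token in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


@dataclass
class SolutionDag:
    name: str
    description: str   # the text that gets vectorized: question pattern plus pivots
    steps: List[str]   # ordered pivots handed to the agent as scaffolding


class DagLibrary:
    def __init__(self, dags: List[SolutionDag]) -> None:
        self.index = [(dag, embed(dag.description)) for dag in dags]  # vectorize once

    def retrieve(self, question: str, k: int = 1) -> List[Tuple[SolutionDag, float]]:
        q = embed(question)
        scored = [(dag, cosine(q, vec)) for dag, vec in self.index]
        return sorted(scored, key=lambda s: s[1], reverse=True)[:k]


library = DagLibrary([
    SolutionDag("remote-logon-sid", "host curl process remote activity logon account SID",
                ["resolve device id", "find curl process", "list logons in window", "read SID"]),
    SolutionDag("phish-to-file", "email phishing attachment file hash sender",
                ["find email", "pivot to attachment", "read file hash"]),
])
best, score = library.retrieve(
    "Which account SID was involved in remote activity on the host that ran curl?")[0]
scaffold = "Follow these pivots in order:\n" + "\n".join(
    f"{i}. {step}" for i, step in enumerate(best.steps, 1))
print(best.name, round(score, 2))
print(scaffold)
```

A production version would use a real embedding model and vector index. It might also hand the agent several candidate DAGs rather than only the top match.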

The Most Important Takeaway: Agent Training Is a Fingerprint

Here’s the point I want to land as clearly as possible:

Every successful agent training program is like a fingerprint. It reflects your SOC, your tools, your process, and your data.

Anyone selling an AI SOC solution that doesn’t build a bespoke design factoring in those variables is selling you something templated. It might look good in a generic demo environment, but it will not be optimized for success inside your organization.

Next Up (Part 5): Continuous Training + Continuous Evaluation Becomes a SOC Capability

In the next (and final) post in this series, I will explain why the future AI-augmented SOC requires two permanent capabilities:

- Continuous evaluation (you need to measure agent performance like any other control)

- Continuous training (you need to improve workflows and memory as your environment changes)

This is not a one-time deployment task; it’s an ongoing job role. SOCs will increasingly hire (or contract) for the ability to engineer, maintain, and govern investigative agents with the same rigor they apply to detections and incident response.

We’ll show what that capability looks like, why it matters, and how to build it.

Mike Pinch

Mike is Security Risk Advisors’ Chief Technology Officer, heading innovation, software development, AI research & development and architecture for SRA’s platforms. Mike is a thought leader in security data lake-centric capabilities design. He develops in Azure and AWS, and in emerging use cases and tools surrounding LLMs. Mike is certified across cloud platforms and is a Microsoft MVP in AI Security.

Prior to joining Security Risk Advisors in 2018, Mike served as the CISO at the University of Rochester Medical Center. Mike is nationally recognized as a leader in the field of cybersecurity, has spoken at conferences including HITRUST, H-ISAC, RSS, and has contributed to national standards for health care cybersecurity frameworks.