What is a Purple Team Assessment?

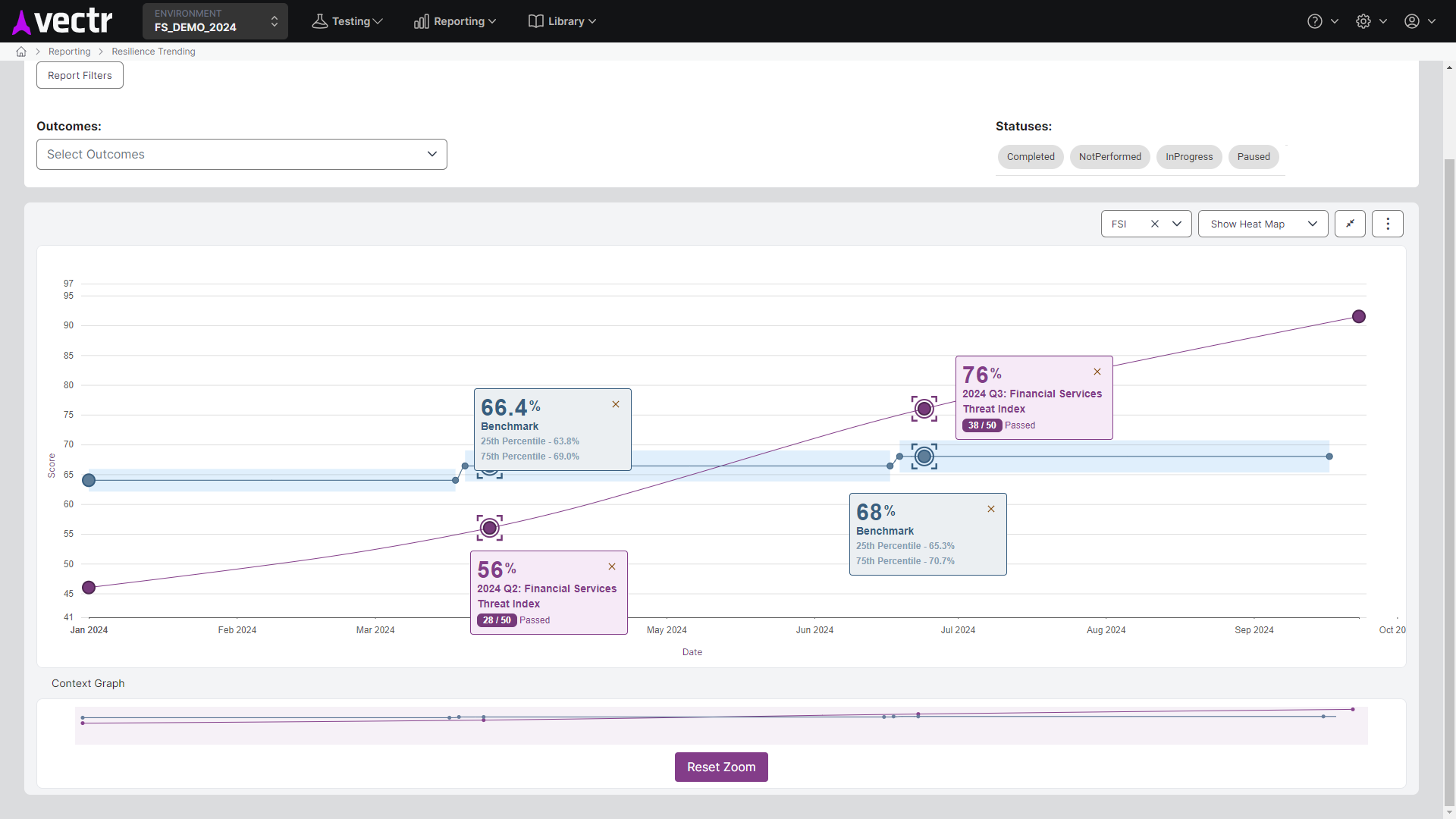

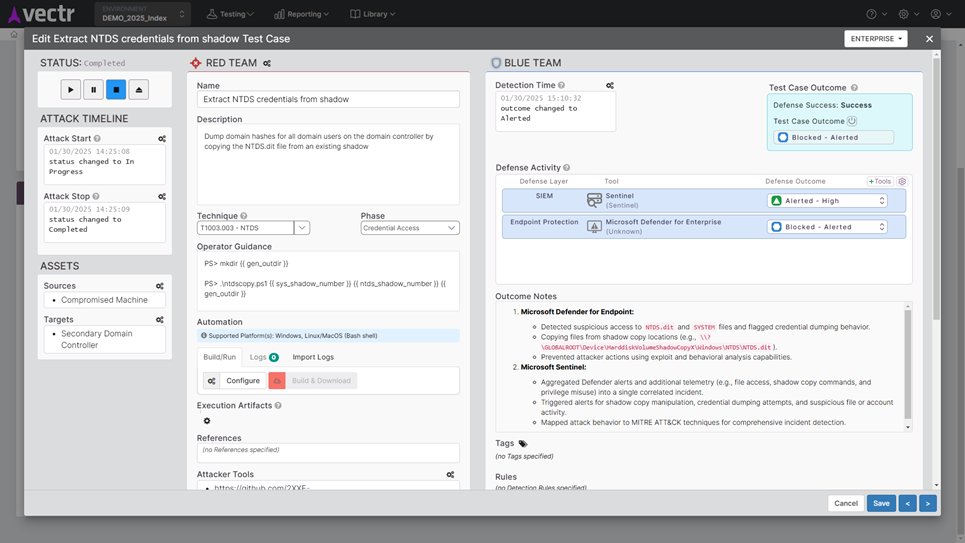

A Purple Team is a collaborative, open-book assessment that prioritizes and produces quantifiable improvements in threat resilience over time. Guided by a facilitator, or “MC,” in a live workshop setting, Red Team operators execute announced attacks that simulate adversaries’ known tactics, techniques, and procedures (TTPs), while Blue Team analysts monitor and record the resulting logging, alerting, and blocking outcomes from the organization’s defensive tools.

We believe Purple Teams are the best way to measure the effectiveness of cyber defenses, and we recommend a regular, calendar-based testing cadence to drive continuous improvement in your prevention and detection controls.

Collaborative.

Quantitative.

Mission-Oriented.

How can Purple Team Assessments strengthen your security posture?

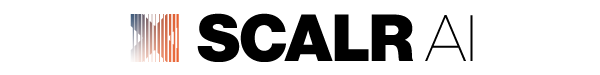

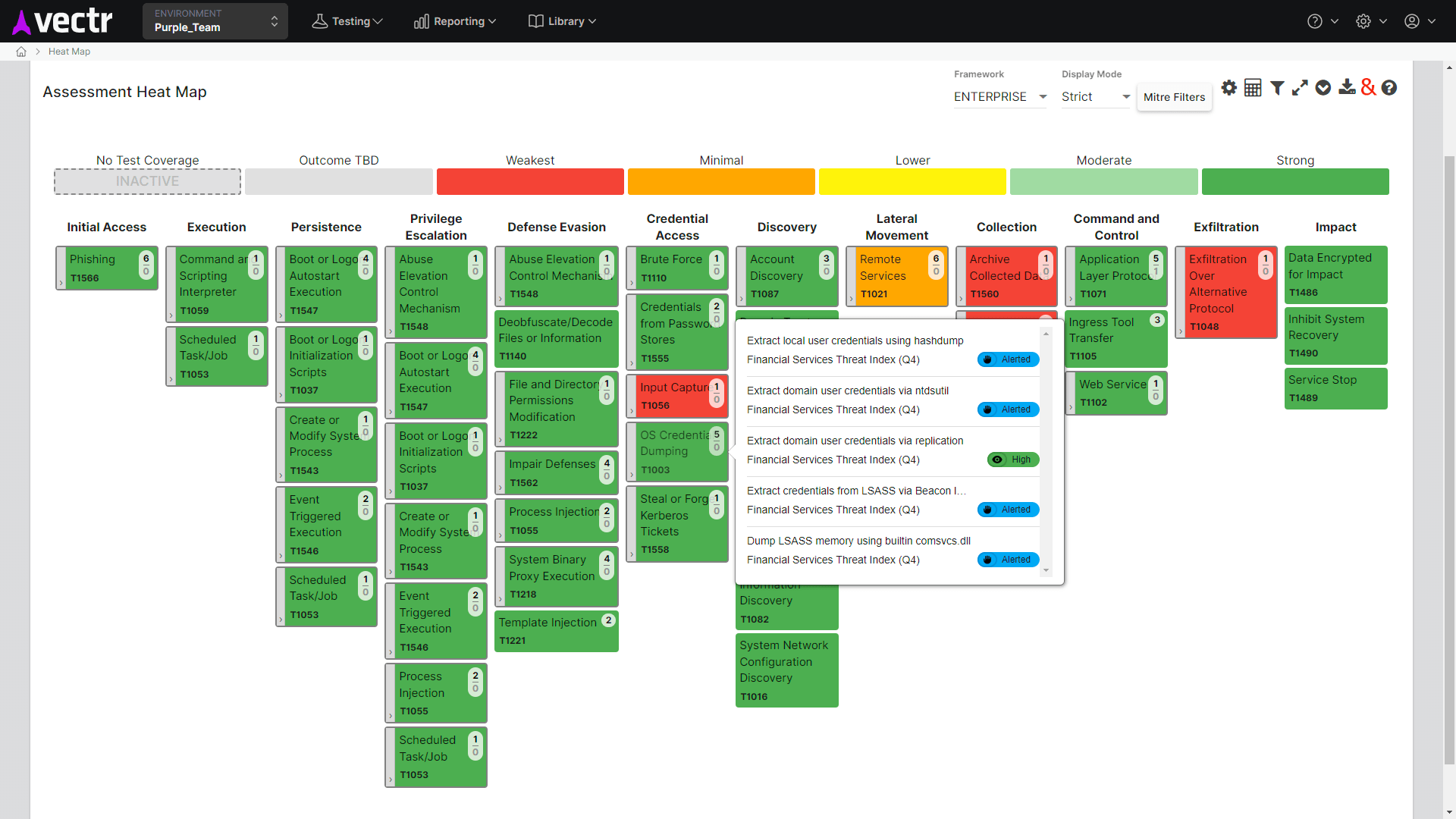

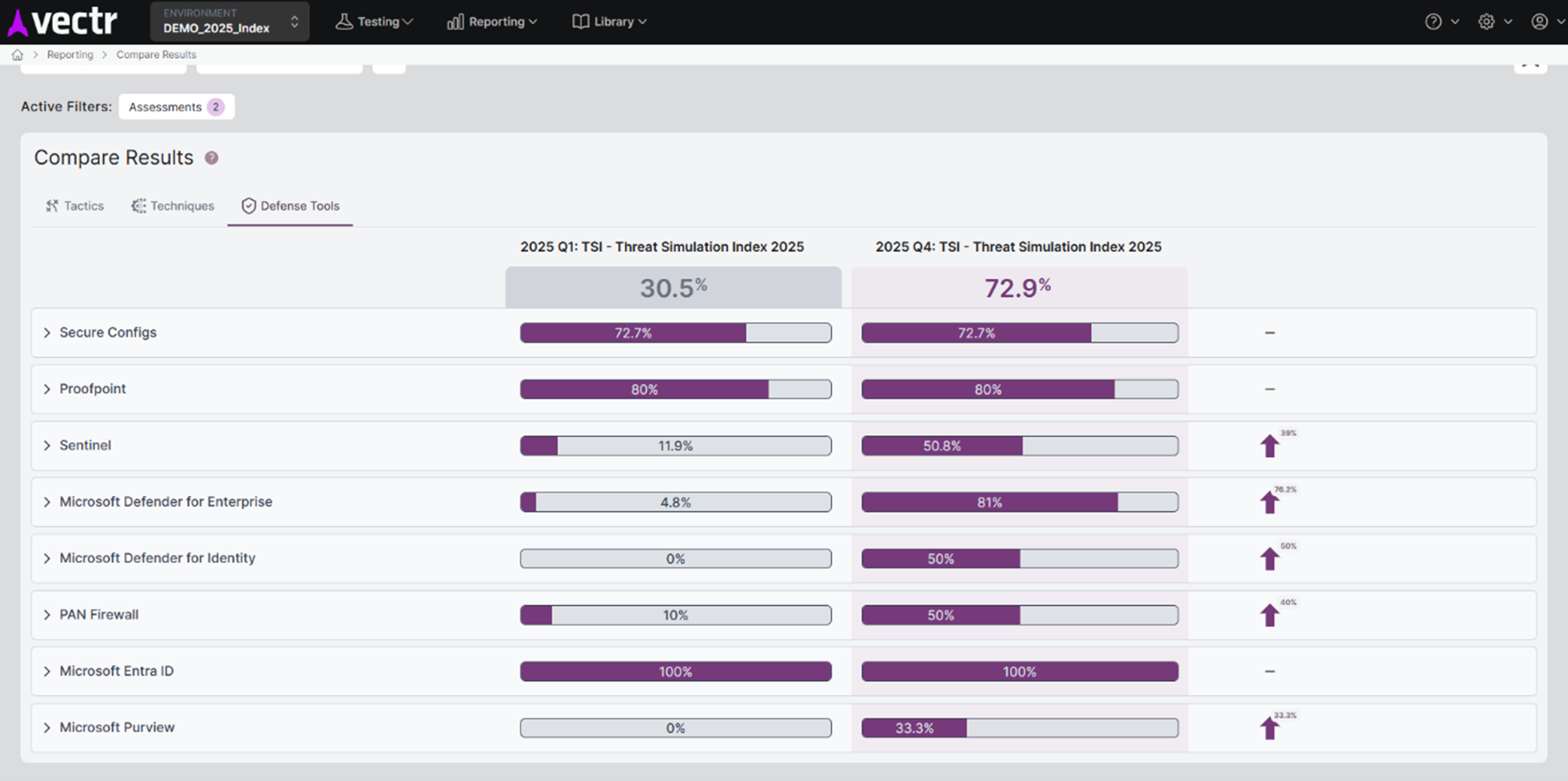

The SRA approach to Purple Teams uses our VECTR platform to track simulated attacks, log outcomes, and generate quantifiable Threat Resilience Metrics. This data can be used to benchmark performance against industry averages, map performance to the MITRE ATT&CK Framework, and visualize threat resilience over time.

The Purple Team process with VECTR can help your organization strengthen its security posture:

Benchmark and build a roadmap

Understand your threat resilience relative to peers, and identify and prioritize remediation activities.

Inspect what you expect

Execute TTPs across ATT&CK tactics to validate whether your security tools and processes are doing what they are supposed to do.

Intel-driven testing

Use the Threat Index and build other intel-informed test plans based on the TTPs your adversaries are using.

Test and benchmark your threat resilience.

SRA is the author of VECTR™, the only free, metrics-driven Purple Team platform. VECTR enables us to use the Threat Index as an intel-driven, prioritized, and benchmarked test plan that helps you align your organization with MITRE ATT&CK in a practical way and answers the question “How do we compare with our peers?”

We work side by side with your team to test your controls, maximize knowledge transfer, agree on improvement priorities, and promote co-ownership of the results. Purple Teams build resilience and readiness for real-world threats!

Why SRA?

- SRA is an industry leader in Purple Team methodology and thought leadership. You can read our blogs about Purple Teams here. We collaborate with executive leadership to present quantifiable improvements in threat resilience at trade shows and forums including RSA, H-ISAC, FS-ISAC, RH-ISAC, and more.

Read more of our Purple Team publications here:

Frequently Asked Questions about Purple Teams

What are the benefits of a Purple Team Assessment?

A Purple Team Assessment enhances an organization’s detection and response capabilities, validates cybersecurity controls, and provides metrics, using tools like VECTR, that align with frameworks such as MITRE ATT&CK.

What is the difference between a Purple Team and a Penetration Test?

A Penetration Test identifies vulnerabilities and risky configurations that could allow access to sensitive data or cause business impact. A Purple Team focuses on visibility, not vulnerability. It assumes that vulnerabilities exist at any given point in time and instead focuses on the capability of security tools and processes to respond to threats.

How does a Purple Team Assessment differ from a Red Team Assessment?

Unlike a Red Team assessment, which simulates real-world attacks covertly, a Purple Team assessment is collaborative and transparent, with the goal of improving defenses in real time. Red Team assessments evaluate Incident Response procedures and capabilities, whereas Purple Team assessments evaluate detection efficacy. Purple Teams provide structured, regular training that helps your teams perform better during Red Team assessments (and when facing real adversaries).

What frameworks or methodologies are used in Purple Team Assessments?

MITRE ATT&CK is the most directly applicable framework, but Purple Teams also provide excellent support for evaluating the Protect and Detect functions of the NIST Cybersecurity Framework (CSF).

What should we test for in a Purple Team Assessment?

SRA facilitates the creation of the free, shared Threat Index test plan every year. The test plan is built in collaboration with 120+ organizations across sectors and focuses on the latest threat groups and TTPs. The Index is a prioritized Purple Team test plan that establishes a common ground for MITRE ATT&CK alignment and peer Threat Resilience Benchmarks™ (TRB).

SRA also maintains test plans for other Purple Team topics such as Azure, Entra ID, AWS, GCP, Kubernetes, Linux, and macOS.

What are the deliverables of a Purple Team Assessment?

The core deliverable is a detailed report outlining tested attack techniques, detection gaps, recommendations for improvement, and a roadmap for enhancing security operations. SRA’s reports also include metrics and visualizations from the VECTR platform, including a heat map of MITRE ATT&CK coverage, benchmark data, and a historical trending chart that shows how your organization’s Threat Resilience Metric improves over time.

Who should participate in a Purple Team Assessment?

Members of the Red Team; members of the Blue Team, including engineering teams and the Security Operations Center (SOC) team; and other relevant stakeholders. SRA leads the assessment with an “MC,” and our consultants work side by side with your in-house team to perform the testing.

How often should Purple Team Assessments be conducted?

We recommend at least twice per year to get started, and four times per year for organizations seeking more regular benchmarks and content detection sprints.

Related Blogs

Building Accessibility into VECTR

Discover how Security Risk Advisors integrated accessibility into VECTR, enhancing usability for keyboard navigation and screen readers while meeting WCAG AA standards. Learn about the challenges and solutions in building inclusive cybersecurity tools.

Threat Simulation Index 2026 Release

The 2026 Threat Simulation Index (“Threat Index” or TSI) is a Threat-Driven Test Plan built annually with 100+ organizations across sectors. It is updated each year to reflect current threat groups, software, and active TTPs used by adversaries. The Threat Index includes 55 test cases, applicable to any industry, and can be used to establish a common ground and prioritization for alignment with MITRE ATT&CK and to measure threat resilience against an industry benchmark.

Microsoft Ignite 2025: The 6 Security Announcements Shaping 2026

Microsoft Ignite 2025 introduced six pivotal security updates, including AI governance tools, passwordless authentication, and autonomous threat response. Discover how these innovations can transform your security operations in 2026.