Security operations aren’t struggling because analysts are tired; they’re struggling because modern environments have outgrown what humans can process. Cloud-native systems, dynamic identities, ephemeral compute, and globally distributed SaaS have made today’s attack surface too complex and too fast-moving for manual, human-driven workflows to keep up.

At the same time, the business now operates in real time. Deployments ship continuously, infrastructure scales instantly, and customer-impacting changes happen in seconds. Yet security decisions still take hours or days. This creates a widening gap where attackers move at machine speed while defenders operate at human speed.

This isn’t an alert fatigue problem. Humans simply can’t process information as fast as today’s systems generate it.

SOAR was traditionally the answer. With the advent of AI, however, automation capabilities and maturity have changed drastically. AI offers a way to help close that gap, not by replacing human judgment, but by supporting it. The right level of automation depends on each organization’s risk tolerance, culture, and maturity. In this blog, we’ll break down the different levels of AI-powered automation and show how Security Operations teams can choose the level that aligns with their goals, comfort, and operating model.

Advancements in AI call for an update to how we introduce and mature automation within the SOC (and across the organization). As a SOC leader for more than five years, I saw the need for a new model for SOC automation, one that lets CISOs talk more clearly about where AI fits, showcase progress, and map goals to a standardized model. So let’s take a look at the newest model for viewing automation in the SOC!

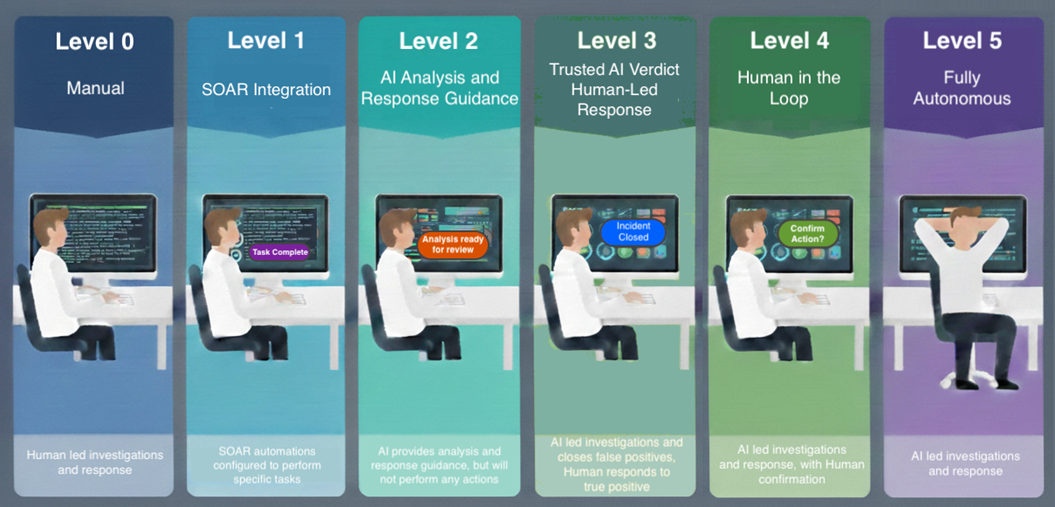

Levels of Automation with AI

I developed the graphic below to help not just Security Risk Advisors (SRA) but the industry as a whole better understand the possibilities of AI as an automation platform, and to lay out a reasonable path for organizations to reach the level that fits their business needs and objectives. It has given us and our clients a better way to highlight where they are on their AI journey and where they are headed. Let’s dive into each level to gain a better understanding.

Level 0 – Manual

Many organizations are at Level 0 or Level 1 in terms of automation. While tools may feature AI-enhanced detection logic, analysts typically do not interact directly with AI. Without an analyst-facing chat interface or integrated AI workflows, investigations remain slow and reactive.

Pre-AI automation helped at times, but these static workflows couldn’t adapt in real time. They accelerated a few tasks but still produced more data than analysts could reasonably consume, leaving a significant gap between system-generated insights and human capacity.

Level 1 – SOAR Integrations

The original promise of SOAR was straightforward: accelerate investigations, reduce mean time to respond (MTTR), and help understaffed SOC teams scale by automating repetitive, manual tasks. By integrating SIEMs, ticketing systems, threat intelligence platforms, and security controls, SOAR platforms aimed to orchestrate workflows that could triage alerts, enrich context, and execute predefined responses faster and more consistently than humans alone.

In practice, however, SOAR often fell short of its transformative goals. These platforms proved expensive to deploy and maintain, required heavily customized integrations, and demanded significant engineering effort to keep playbooks operational as tools, APIs, and environments changed. Many organizations attempted to automate complex, edge case heavy incidents first, rather than starting with simple, high confidence use cases. This led to brittle workflows, false confidence, and frustration. As a result, many SOCs today technically “have SOAR,” but use it narrowly for a small handful of semi-automated playbooks that are not continuously optimized. Level 1 automation improved efficiency at the margins, but without intelligence or adaptability, it struggled to keep pace with the growing complexity and volume of modern security operations, setting the stage for AI-driven approaches in higher maturity levels.

Level 2 – AI Assisted Analysis and Response Guidance

There are two common entry points into Level 2:

- Analyst-Driven AI Assistance: Analysts interact with AI through a chat interface, asking questions and receiving contextual analysis or response guidance on demand.

- AI-Enhanced Automated Workflows: AI is inserted into SOAR or workflow engines to autonomously analyze events and enrich data without human prompting. By the time an analyst opens an incident, AI has already enriched it, performed a baseline analysis, and recommended next steps for the response.

Integrating AI into workflows is a foundational step toward higher levels of automation. It meaningfully reduces investigation times and establishes trust in AI-generated analysis.

Level 3 – Trusted AI Verdict, Human-Led Response

Once AI has demonstrated reliable accuracy in analysis and guidance, organizations can allow it to autonomously close false positives. At this stage:

- Analysts have already reviewed weeks or months of AI output.

- You have measurable accuracy, quality, and context completeness data.

- AI’s precision is comparable to that of a human analyst. (AI doesn’t have to be 100% accurate, but it needs to be at least comparable to your human analysts.)

Early on, organizations typically implement review processes to ensure AI is closing incidents appropriately, much like quality assurance for human analysts. At this level we trust that AI is making the proper determinations, but we aren’t ready to let it loose to perform actions that could affect business operations.
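The readiness criteria above can be expressed as a simple promotion gate. The following is a minimal sketch, not a prescribed method: the `ReviewedVerdict` schema, the `min_samples` threshold, and the idea of passing in a measured `human_baseline` are all illustrative assumptions.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ReviewedVerdict:
    """One AI verdict that a human QA reviewer has checked (hypothetical schema)."""
    incident_type: str
    ai_said_false_positive: bool
    reviewer_agreed: bool


def auto_close_precision(reviews: List[ReviewedVerdict]) -> Optional[float]:
    """Share of AI 'false positive' verdicts that the reviewer confirmed."""
    closes = [r for r in reviews if r.ai_said_false_positive]
    if not closes:
        return None  # nothing to measure yet
    return sum(r.reviewer_agreed for r in closes) / len(closes)


def eligible_for_level_3(
    reviews: List[ReviewedVerdict],
    human_baseline: float,
    min_samples: int = 50,  # assumed threshold; tune to your own review volume
) -> bool:
    """Allow auto-close only with enough reviewed samples and
    precision at or above the measured human baseline."""
    sample_count = sum(1 for r in reviews if r.ai_said_false_positive)
    precision = auto_close_precision(reviews)
    return (
        precision is not None
        and sample_count >= min_samples
        and precision >= human_baseline
    )
```

Running the gate per incident type, rather than across the whole queue, keeps one well-behaved alert category from masking a weak one.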

Level 4 – Human-in-the-Loop Response

At Level 4, AI can take remediation actions, with a human providing final approval. This is often the most anxious leap for SecOps teams because AI now has the potential to affect users, systems, and operations.

The human-in-the-loop model provides a safeguard: AI identifies the action, provides justification, and executes the response only after human approval.

Having AI carry out the response brings additional benefits, even with human approval slowing the process:

- Speed: API-driven remediation is dramatically faster than manual action.

- Accuracy: AI is less likely than humans to skip steps or make clerical errors.
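The approval flow described above (identify, justify, execute only after approval) might be sketched as follows. This is an illustration under assumptions: `ProposedAction`, `run_with_approval`, and the `approve` and `execute` callbacks are hypothetical names, standing in for whatever chat prompt, ticket workflow, or API client your environment uses.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class ProposedAction:
    """A remediation the AI wants to run (hypothetical schema)."""
    incident_id: str
    action: str         # e.g. "disable_account"
    target: str         # e.g. a username or hostname
    justification: str  # the AI's explanation, shown to the approver


def run_with_approval(
    proposal: ProposedAction,
    approve: Callable[[ProposedAction], bool],
    execute: Callable[[ProposedAction], None],
    audit_log: List[Tuple[str, str, str, str]],
) -> bool:
    """Execute the proposal only if the human approver says yes.

    The decision is always recorded, whether approved or rejected.
    """
    approved = approve(proposal)
    decision = "approved" if approved else "rejected"
    audit_log.append((proposal.incident_id, proposal.action, proposal.target, decision))
    if approved:
        execute(proposal)
    return approved
```

Recording rejections as well as approvals gives the QA process a record of where the AI's proposed actions were not trusted, which is useful input when deciding whether an incident type is ready for a higher level.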

Level 5 – Fully Autonomous

At the final level, AI operates independently within a defined scope:

- It investigates incidents

- It takes action

- It closes cases, all without human intervention

This doesn’t eliminate analysts. Instead, their work shifts toward:

- Threat hunting

- Complex investigations

- Enhanced detection engineering

- Improving and training AI systems

Human oversight continues through quality review, but analysts no longer need to manually process routine or low-risk events.

What’s Next

Once you’ve identified the automation levels, the next step is building a realistic, structured adoption plan.

1. Determine Your Goal Level

Not every organization will, or should, move to Level 5. Some industries require multiple approvals before action, making fully autonomous remediation impossible. That’s okay.

The goal is to define your ceiling, not to force every incident type to reach it.

2. Set Goals and Targets for Each Level

You don’t need to move every alert to the same automation level. In fact, you shouldn’t.

As you set targets, consider:

- Incident Review: Which incident types require the least context and have clear patterns? These are ideal candidates for higher automation levels.

- Response Review: Document required actions and frequency. The more consistent and predictable a response is, the easier it is to automate safely.

- Documentation Inventory: Runbooks and playbooks significantly accelerate AI onboarding. The more you have, the faster your models can become accurate.

- Tooling and Data Sources: Identify the tools and datasets required for investigations. Prioritize integrations that support the majority of cases rather than outliers.

Example target over the next six months:

- 75% of alerts reach Level 2

- 30% reach Level 3

- 10% reach Level 4

Clear goals prevent the initiative from losing momentum or stalling due to perfectionism.
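To track progress against targets like the example above, a small check can compare the current alert mix with each goal. This sketch assumes the percentages are cumulative (an alert that reaches Level 3 also counts toward Level 2), which is one reading of "reach" and not something the example states outright. The function names are illustrative.

```python
from typing import Dict, List

# Example targets from the text, read as "share of alerts reaching at least this level".
TARGETS: Dict[int, float] = {2: 0.75, 3: 0.30, 4: 0.10}


def share_reaching(levels: List[int], level: int) -> float:
    """Fraction of alerts whose highest automation level is >= `level`."""
    if not levels:
        return 0.0
    return sum(1 for lv in levels if lv >= level) / len(levels)


def target_gaps(levels: List[int], targets: Dict[int, float] = TARGETS) -> Dict[int, float]:
    """Gap to each target: positive means still short, zero or negative means met."""
    return {lv: round(goal - share_reaching(levels, lv), 4) for lv, goal in targets.items()}
```

Here `levels` is simply a list with one entry per alert, holding the highest automation level that alert went through.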

3. Execute the Plan

Once the roadmap is set, it’s time to build:

- Integrations for the data AI needs

- Prompts and agent logic

- Continuous refinement pipelines

- Testing and QA gates

- Analyst training for AI oversight

AI is not “set and forget.” Just as analysts learn continuously, AI requires ongoing tuning to maintain accuracy and avoid drift.

Measuring Progress

AI automation in the SOC is a maturity journey, not a switch. Without clear metrics, teams rely on perception (“it feels faster” or “we trust it less”) or risk advancing automation beyond their risk tolerance. Well-defined KPIs provide objective evidence to guide decisions, build trust, and ensure automation progresses at an appropriate pace.

Measurable outcomes benefit both SOC teams and executive leadership. For analysts, KPIs establish clear criteria for tracking improvement. For CISOs, they enable transparent reporting on risk reduction, operational efficiency, and AI maturity to executive stakeholders.

While specific metrics will vary by organization, the following KPIs are broadly applicable and effective at demonstrating the impact of SOC automation:

MTTD / MTTR (Mean Time to Detect / Respond)

These metrics reflect how quickly the SOC identifies and responds to threats. As incidents progress through higher automation levels, organizations should see reduced detection and response times translating to lower business impact and reduced operational disruption.

- Further refining these metrics to compare incident types before and after each automation level can highlight which levels have the most impact (the largest reductions in MTTD and MTTR) and help you prioritize your efforts. For instance, if Level 2 (Assisted Analysis) is cutting your MTTR by 50% and Level 3 (Trusted AI Verdict) is cutting it by only 10%, it may make sense to reevaluate your goals and prioritize getting more incidents into Level 2 before refocusing your team’s limited resources on Level 3.
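The comparison described above can be sketched in a few lines. The incident fields (`level`, `detected_at`, `resolved_at`) are hypothetical, and MTTR is measured here as detection-to-resolution time, which may differ from how your SOC defines it. For a fair comparison, run this on one incident type at a time.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from statistics import mean


def mttr_minutes_by_level(incidents):
    """Mean minutes from detection to resolution, grouped by automation level.

    Each incident is a dict with hypothetical keys:
    'level' (int), 'detected_at' and 'resolved_at' (datetime).
    """
    durations = defaultdict(list)
    for inc in incidents:
        delta = inc["resolved_at"] - inc["detected_at"]
        durations[inc["level"]].append(delta.total_seconds() / 60)
    return {level: mean(vals) for level, vals in sorted(durations.items())}


def reduction_vs_baseline(mttr_by_level, baseline_level=0):
    """Percent MTTR reduction of each level relative to the baseline level."""
    base = mttr_by_level[baseline_level]
    return {
        level: round(100 * (base - value) / base, 1)
        for level, value in mttr_by_level.items()
        if level != baseline_level
    }
```

Level 0 (manual handling) is used as the default baseline; pass a different `baseline_level` to compare adjacent levels instead.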

Quality Outcomes (False Positives / False Negatives)

Quality assurance must be in place before introducing automation. Tracking false positive and false negative rates ensures increased speed and throughput do not come at the expense of detection accuracy. Improving efficiency while missing attacks creates material business and financial risk.
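As a rough illustration, both rates can be computed from QA-reviewed verdicts using the standard definitions (false positive rate = FP / (FP + TN), false negative rate = FN / (FN + TP)). The input shape, pairs of verdict and reviewer conclusion, is an assumption; adapt it to however your QA program records outcomes.

```python
def quality_rates(reviews):
    """Compute false positive and false negative rates from QA-reviewed verdicts.

    `reviews` is an iterable of (verdict, truth) pairs. Each value is either
    "malicious" or "benign"; `truth` is the QA reviewer's conclusion.
    """
    tp = fp = tn = fn = 0
    for verdict, truth in reviews:
        if verdict == "malicious" and truth == "malicious":
            tp += 1
        elif verdict == "malicious" and truth == "benign":
            fp += 1
        elif verdict == "benign" and truth == "benign":
            tn += 1
        elif verdict == "benign" and truth == "malicious":
            fn += 1  # a missed attack
    return {
        "false_positive_rate": fp / (fp + tn) if (fp + tn) else 0.0,
        "false_negative_rate": fn / (fn + tp) if (fn + tp) else 0.0,
    }
```

Computing the same rates for human analysts and for AI, per automation level, gives a like-for-like view of whether speed gains are costing accuracy.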

Analyst Effort Saved and Cost Avoidance

Measuring analyst hours saved at each automation level highlights operational efficiency gains. When translated into estimated cost avoidance, this metric helps demonstrate return on investment and shows how automation enables analysts to focus on higher value work.
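One simple way to arrive at these numbers is sketched below. All inputs are assumptions you would supply from your own measurements: incident counts per level, average analyst minutes saved per incident at that level, and a loaded hourly cost for analyst time.

```python
def cost_avoidance(incident_counts, minutes_saved_per_incident, hourly_cost):
    """Estimate analyst hours saved and cost avoided per automation level.

    incident_counts: {level: number of incidents handled at that level}
    minutes_saved_per_incident: {level: average analyst minutes saved}
    hourly_cost: loaded analyst cost per hour (your own estimate)
    """
    result = {}
    for level, count in incident_counts.items():
        hours = count * minutes_saved_per_incident.get(level, 0) / 60
        result[level] = {
            "hours_saved": round(hours, 1),
            "cost_avoided": round(hours * hourly_cost, 2),
        }
    return result
```

For example, 600 incidents at Level 2 saving 10 minutes each works out to 100 analyst hours; at an assumed cost of 60 per hour, that is 6,000 in estimated cost avoidance.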

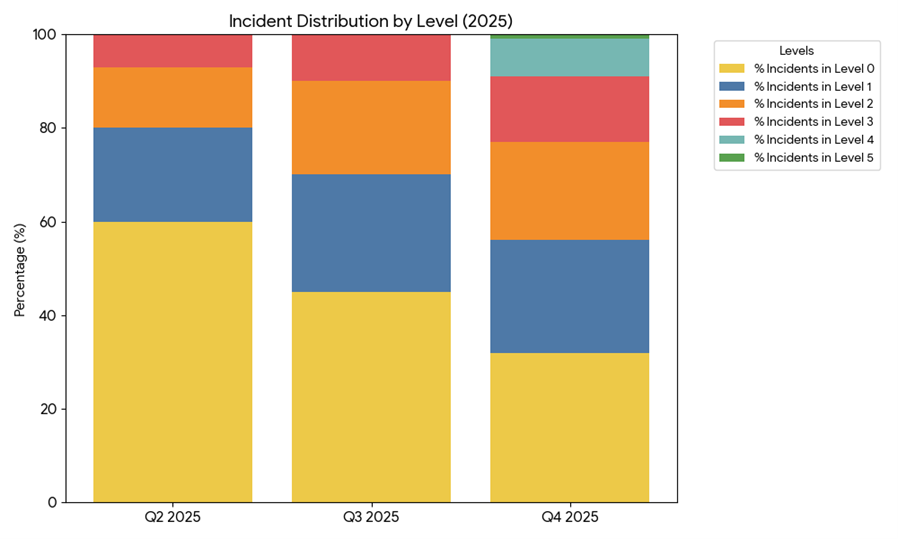

Percentage of Incidents by Automation Level

Tracking the distribution of incidents across automation levels (0-5) provides a clear view of progress toward the target state. This metric reinforces accountability and ensures automation advances in line with defined goals.

- Depending on your ticketing system’s capabilities, you can utilize automated tagging or labels to identify the level that each incident goes through. This will allow you to easily pull metrics, like the ones below, for your incidents in real time.
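As a sketch of the tagging approach, suppose each ticket carries a label such as `automation-level:3`. That label format is hypothetical, as is the choice to treat untagged tickets as Level 0; substitute whatever convention your ticketing system supports.

```python
import re
from collections import Counter

# Hypothetical label convention: "automation-level:<0-5>"
LEVEL_TAG = re.compile(r"^automation-level:([0-5])$")


def level_from_labels(labels):
    """Return the highest automation level found in a ticket's labels.

    Untagged tickets are treated as Level 0 (manual), an assumption of this sketch.
    """
    levels = []
    for label in labels:
        match = LEVEL_TAG.match(label)
        if match:
            levels.append(int(match.group(1)))
    return max(levels, default=0)


def level_distribution(tickets):
    """Percentage of tickets at each level 0-5; `tickets` is a list of label lists."""
    counts = Counter(level_from_labels(labels) for labels in tickets)
    total = sum(counts.values())
    return {
        level: (round(100 * counts[level] / total, 1) if total else 0.0)
        for level in range(6)
    }
```

Taking the highest level per ticket means an incident that passed through assisted analysis and then a trusted verdict is counted once, at Level 3.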

Quality Tracking

Quality is foundational to an effective SOC and will be critical to implementing automation. Before beginning any AI maturity journey, SOCs must have a formal quality assessment program. Without a baseline, organizations cannot effectively tune AI, compare performance, or detect degradation in outcomes, significantly increasing the risk of missed attacks.

At SRA, our QA program evaluates AI output using the same standards applied to human analysts. Treating AI as another SOC contributor allows us to validate performance and ensure quality remains consistent as AI is introduced and refined.

Organizations without a QA program should prioritize building one and begin measuring current analyst performance. This is not intended to penalize analysts, but to identify training gaps, documentation issues, and tooling limitations. Establishing a current state baseline is essential for meaningful comparison.

Expecting AI to be perfect when human performance varies is unrealistic and often stalls progress. While AI can improve consistency and thoroughness, it is not infallible. Without quality governance and clear benchmarks, organizations will struggle to mature automation safely and achieve their intended outcomes.

Wrap Up

Modern environments generate data at a pace no human team can keep up with. Traditional SOAR solutions tried to close the gap but lacked adaptability and context. AI, when implemented thoughtfully, is positioned to finally deliver what SOAR promised.

Organizations across industries are being asked for their AI strategy, and the SOC is no exception. These automation levels provide a practical, transparent framework for introducing AI into SecOps without overcommitting or overselling.

SRA has been working at the intersection of AI and security operations for years, and we’ve seen the challenges organizations face firsthand. Whether you need help building your plan, developing capabilities, or operating the entire SecOps stack, we’re here to help.

We’re all working toward the same goal: keeping the world safe from attackers with the speed and precision modern environments demand.

Greg Stachura

Greg focuses on Incident Response and the Cyber Security Operations Center. Greg has experience managing SIEM, as well as orchestration and automation platforms. He also has an extensive background in Incident Response playbook development, forensics, and log analysis. Prior to joining Security Risk Advisors, Greg worked extensively in the financial, healthcare, and education sectors.