TL;DR

You can describe the progress of your cybersecurity program in a single, threat-driven metric: the Threat Resilience Metric. This metric is born from prioritized MITRE ATT&CK alignment and can be benchmarked with your peers.

Prelude: NIST CSF and MITRE ATT&CK

NIST CSF is a high-level framework that aims to describe an entire security program, including governance and preventative, detective, and restorative controls. It does not provide detailed guidance for assessing the ability to defend against advanced threat actors. Teams perform self-assessments and often ask outside parties to conduct independent ones, using this same lens. Professional judgment, as in any field, varies.

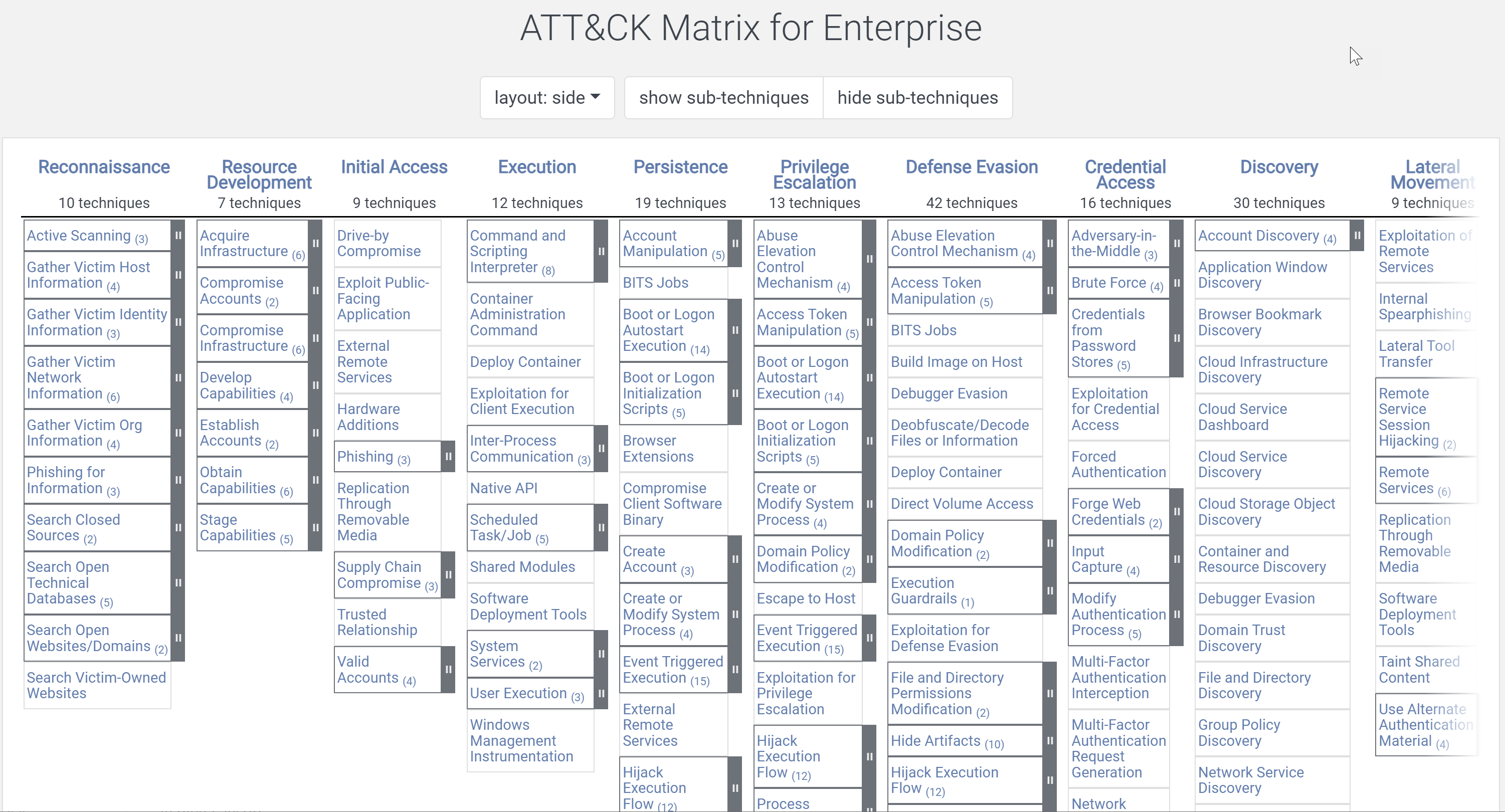

MITRE ATT&CK is a highly detailed framework that describes threat actor attack techniques. Most organizations that conduct Purple Teams create their test plans based on focus areas they choose within ATT&CK. Teams interpret ATT&CK and create their test cases. Professional judgment, experience, and other threat intelligence come into play when designing a specific test case. And once again, internal and independent perspectives are welcome and vary.

The Challenges with MITRE ATT&CK

MITRE ATT&CK:

- Changes often and is hard to keep up with. ATT&CK is updated twice per year, which is fast for a framework. NIST CSF, in contrast, is rarely updated. If a team is trying to track ATT&CK in its entirety, it’s a Sisyphean task. And if you’re tracking, you’ve just realized…

- It’s not pre-prioritized for you. There are some techniques that apply to everyone and many more that do not, and they are not distinguishable without considerable knowledge & experience.

- Security industry vendors have co-opted it. And this means they’re telling teams that “our solution meets 100% of MITRE ATT&CK.” Honestly, you should know better than to entertain that. There can be a version of the truth where a detection platform says they have at least one analytic for each ATT&CK technique. But take the example of Account Discovery – is there just a single way of doing that? Remember, this is an expanding universe. Another version of the truth is a SIEM that says it can intake any log, and therefore can detect any technique, and will even show you a map of how it is poised to do that. That view in your SIEM is not validated. In security, we need to inspect what we expect. In other words, don’t trust anything you don’t test. And on that topic…

- Does not provide detailed self-assessment methods, aka test cases. There is a reason: it’s hard to say one way is the right way. For example, there are many parameters in a brute force password attack. How many password attempts make up a brute force? One team may select 500 and another may choose 5. 500 is easier to detect, but is it really what threat actors do? Which threat actors?

- Does not give you a way to document your team’s test cases and results. But I do give you a way. Start with the free VECTR.io. Security Risk Advisors publishes it on GitHub.

- Does not give you a way to benchmark with your peers. And for as hopeless as that sounds given these challenges, please hang in there for just a little more suspense, because we need to talk about metrics for a moment.

Your Metrics Don’t Tie Back to Threat Actors

A lift & update from my 2019 blog on this topic, with a little expansion. Your metrics aren’t close enough to threat actors. You have no really good way to measure your progress on MITRE ATT&CK, let alone benchmark with your industry and peers. What you generally have are these:

- Hygiene Metrics: Systems missing patches, systems with high-risk vulnerabilities, systems missing AV, systems that are business-critical, and 35 ways to describe the intersection of time and those four conditions. I’m not saying this isn’t important, but I am saying it doesn’t fully describe how susceptible to attack you are, or how well you are defending these systems. Keep your hygiene metrics, the best ones only, and read on.

- Compliance Metrics: Can you disable a user account in 24 hours? Do you have an accurate inventory of all your assets? Do you document your changes and releases? What kind of fire suppression system is in your data center? Are you doing the things your auditor told you to do? Does your auditor have actual experience hacking or defending a network? Unlikely. Do whatever you need to do to avoid fines, but those same things won’t avoid threat actors.

- Hyperbole Metrics: These are the huge numbers that mean nothing. Our organization defended against 3 billion attacks last quarter! Santé! Actually, the NGFW and email gateway just dropped them. Those “attacks” were untargeted, not a serious threat and not applicable to systems inside the firewall. Hyperbole metrics can also give a wrong sense of a team’s actual work efforts. A team spends a LOT of time preventing and responding to a much smaller volume of more significant events. But it’s hard to draw attention to 30 when there is a 3,000,000,000 in the same dashboard. We painted ourselves into a corner with hyperbole metrics, because now the Board thinks they actually mean something and they expect to see them. If we can transition to something more meaningful, we can phase out Hyperbole metrics.

- SecOps Metrics: It makes sense to trend types of incidents, tickets worked & closed, etc. It helps us adjust resources and tuning priorities. But while I’m at it, can we stop being obsessed with DWELL TIME? You can’t consistently capture it unless you’re consistently getting compromised! I sincerely hope that’s not a monthly, quarterly, or even annual thing for you.

Metrics that Tie Back to Threat Actors

In 2019 I first wrote about the concept of Threat Resilience Metrics (TRM). Their raison d’être is to fill the “so what” gap in hygiene and hyperbole metrics, and to help teams establish their measurement of a Threat-Driven security program, as opposed to compliance, politics, or any other thing less important than protecting the organization against threat actors.

Threat Resilience Metrics: WHAT – WHEN – HOW?

WHAT?

- A purposeful, prioritized way to align to MITRE ATT&CK.

- A way to describe your security program’s readiness to protect, detect and respond to threat actors.

- A way to obtain a meaningful benchmark in your industry or smaller circle of trust.

WHEN?

- Now.

- You (maybe): But we’re not mature enough.

- Me (always): You won’t be mature until you take your first snapshot and understand where your controls can be proven to block, detect and create actionable alerts for your SOC.

HOW?

- Purple Teams. Call it something else if you must (adversary simulation, etc.).

- Collaborative, open-book work with red, blue, and GRC all weighing in on test case outcomes (GRC should redefine its scope to be a part of these types of exercises – more on that another time).

- Limited automation. Sorry vendors, this is not a single button click.

- The core of the TRM is a single value, a percentage. Specifically, the percentage of test cases “passed”.
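
The core calculation described above can be sketched in a few lines. This is a minimal illustration, not how VECTR computes anything: the `CaseResult` structure, the sample test cases, and the simple pass/fail flag are all assumptions made for the example. Your team still has to agree on what "passed" means (blocked, detected, alerted) before the number is worth anything.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CaseResult:
    """One purple team test case outcome (hypothetical structure)."""
    technique_id: str  # ATT&CK technique ID, e.g. "T1110"
    name: str          # short description of the specific procedure tested
    passed: bool       # outcome as agreed by red, blue, and GRC


def threat_resilience_metric(results):
    """Return the percentage of test cases passed, rounded to one decimal."""
    if not results:
        raise ValueError("no test cases recorded")
    passed = sum(1 for r in results if r.passed)
    return round(100.0 * passed / len(results), 1)


# Invented sample outcomes, for illustration only.
results = [
    CaseResult("T1087", "Account Discovery via net user", True),
    CaseResult("T1110", "Brute force, 5 attempts", False),
    CaseResult("T1110", "Brute force, 500 attempts", True),
    CaseResult("T1059", "Encoded PowerShell command", True),
]

print(threat_resilience_metric(results))  # 3 of 4 passed -> 75.0
```

Note that the two brute force cases count separately. A technique is not "covered" because one variation of it was caught.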

Metrics that Tie Back to Threat Actors, How?

Start with a common denominator. In order to prioritize alignment to MITRE ATT&CK and specific threat actor techniques, we need to agree on which techniques matter the most. We’ve tackled this and have a solution to share with you. The solution is an Index of MITRE ATT&CK, which is a representative sample of ATT&CK and does not try to emulate too much, and certainly not all of it. It’s an Index just like the S&P, the Dow Jones, etc. It changes over time with threats but never tries to describe every threat at once.

Starting in early 2020, we drew together friends from organizations you would know, specifically friends who care about threat intel, hunting, red, purple and blue. This group agreed on the top five threat actors targeting their sector. We went to MITRE ATT&CK and filtered on the techniques that ATT&CK attributed to those threat actors. Thankfully we didn’t stop there or I’d have nothing but a chart for you. The next essential step was to create specific, repeatable test procedures for each of those techniques, down to the command line level where needed. We did that, incorporated the group’s feedback, and released v1. We created a shared purple team / threat emulation plan that would be consistently performed and repeatable across organizations. The original Index was 56 test cases. Sound like the basis for a hyperbole metric? Absolutely not.
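
To make the filtering step concrete, here is a rough sketch of narrowing ATT&CK down to the techniques tied to a chosen set of threat groups. Everything in it is an assumption for illustration: the group names are placeholders, the group-to-technique mapping is invented rather than taken from ATT&CK, and ranking by how many groups share a technique is just one plausible way to order the list, not necessarily how the Index was built.

```python
from collections import Counter

# Hypothetical mapping of threat groups to technique IDs.
# Illustrative only; these are not real ATT&CK attributions.
GROUP_TECHNIQUES = {
    "Group A": {"T1059", "T1087", "T1110"},
    "Group B": {"T1059", "T1566"},
    "Group C": {"T1087", "T1566", "T1003"},
}


def build_index(group_techniques, top_groups):
    """Collect techniques used by the chosen groups, most widely shared first."""
    counts = Counter()
    for group in top_groups:
        counts.update(group_techniques[group])
    # Order by number of groups using the technique (descending), then by ID.
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


index = build_index(GROUP_TECHNIQUES, ["Group A", "Group B", "Group C"])
for technique_id, group_count in index:
    print(technique_id, group_count)
```

A list like this is only the chart. Each entry still needs a specific, repeatable test procedure written for it before it becomes a test case.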

Find out more about the Threat Index here.

Threat Resilience Metric Over Time

A snapshot is useful, but the powerful story of Threat Resilience Metrics is historical trending. We’ve found that quarterly purple teams propel this measurement in most organizations. Executing a compact, purposeful test plan is the fast part – remediation takes more time.
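
A trend like this is easy to compute once each quarterly exercise is recorded. The sketch below assumes each snapshot is a simple `(label, passed, total)` tuple; the pass counts are invented for illustration, and only the total of 56 echoes the size of the original Index.

```python
def trm_trend(snapshots):
    """Return (label, trm, change) tuples; change is None for the first snapshot."""
    trend = []
    previous = None
    for label, passed, total in snapshots:
        trm = round(100.0 * passed / total, 1)
        change = None if previous is None else round(trm - previous, 1)
        trend.append((label, trm, change))
        previous = trm
    return trend


# Invented quarterly results against a 56-case test plan.
snapshots = [("Q1", 20, 56), ("Q2", 28, 56), ("Q3", 35, 56)]

for label, trm, change in trm_trend(snapshots):
    suffix = "" if change is None else f" ({change:+.1f} points)"
    print(f"{label}: {trm}%{suffix}")
```

If the test plan itself changes between quarters, say so next to the number, because the denominator moved.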

Connecting Purple Teams to APT Resilience

VECTR®’s users love the dynamic MITRE ATT&CK heatmap that can also represent the team’s Threat Resilience Metric. Organizations making the most use of VECTR® continue to add their own test cases and this becomes a library / repository to document broader-purpose security testing.

MITRE heatmap in VECTR® filtered to demonstrate coverage against APT29

A powerful feature is the ability to filter test cases by any threat group described in ATT&CK. This facilitates answering senior management questions like, “I just read about APT29, what have we done to prepare?”
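
If you keep your own test case records, the same question can be answered with a simple filter. This is a sketch of the idea only and not VECTR’s API or data model: the dictionary layout is hypothetical, and the group tags and outcomes are invented rather than real ATT&CK attributions.

```python
def coverage_for_group(test_cases, group):
    """Return (passed, total) for the test cases tagged with the given group."""
    relevant = [tc for tc in test_cases if group in tc["groups"]]
    passed = sum(1 for tc in relevant if tc["passed"])
    return passed, len(relevant)


# Invented records; group tags are illustrative, not real attributions.
test_cases = [
    {"technique": "T1059", "groups": {"APT29", "Group B"}, "passed": True},
    {"technique": "T1087", "groups": {"APT29"}, "passed": False},
    {"technique": "T1566", "groups": {"APT29", "Group C"}, "passed": True},
    {"technique": "T1110", "groups": {"Group B"}, "passed": True},
]

passed, total = coverage_for_group(test_cases, "APT29")
print(f"APT29: {passed} of {total} test cases passed")
```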

Getting Started

- Get VECTR®. It’s free or inexpensive via Azure Marketplace. Join the VECTR Squadron and Discord channel for tips & tricks.

- Download the Threat Index and try it. If you don’t have the skills or want someone independent, call SRA or another great firm.

- Start or adapt your Purple Teaming with metrics in mind. Capture your first Threat Resilience Metric. Maybe wait till you’ve done it twice before you go big with it. You’ll tell a better story of how you’ve already improved and have a roadmap for what’s ahead. You just might also develop some quantitative support for your next budget ask.

Tim Wainwright

Tim has been a speaker at RSA, Gartner, FS-ISAC, H-ISAC and (ISC)2 National Congress. Tim helped found Security Risk Advisors in 2010. Tim advises CISO Offices on modernizing cybersecurity strategy to improve governance, communication, team culture and growth, detection and response capabilities. Tim is a thought leader in the area of purple teams and attack simulation and metrics to describe quantified threat resilience. Tim has a background in penetration testing, security assessment, and frameworks.